Introduction to dataRetrieval

Introduction

In this ~45 minute introduction, the goal is to introduce the modern dataRetrieval workflows.

The intended audience is someone who:

- Is new to dataRetrieval
- Has some R experience

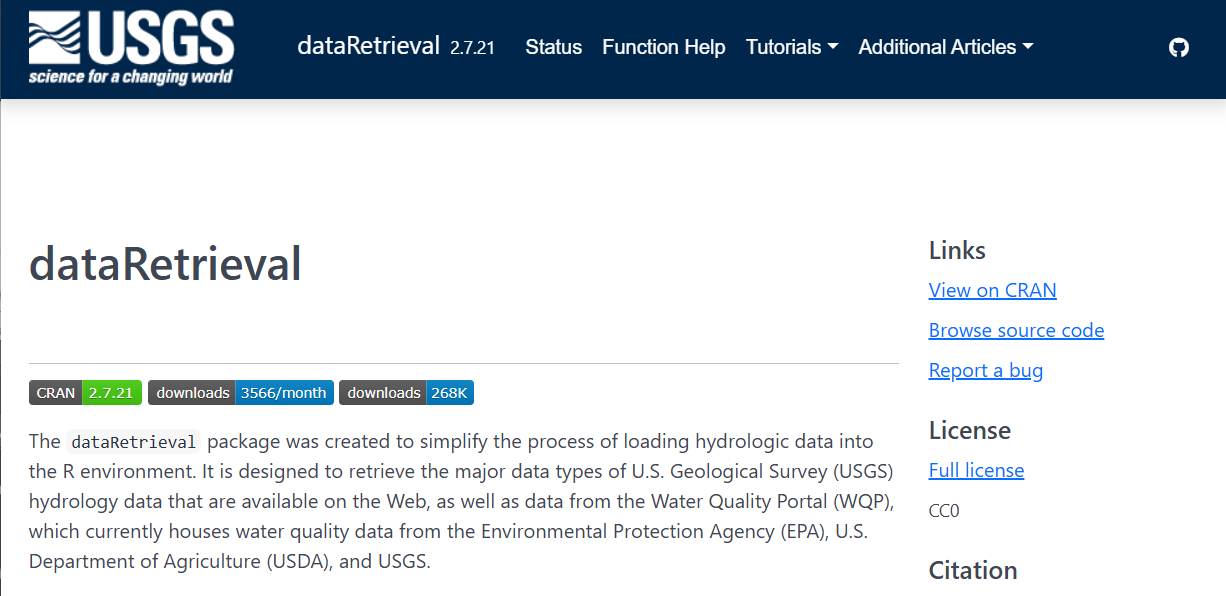

dataRetrieval: R package for US water data

USGS Water Data APIs *

- Surface water levels
- Groundwater levels
- Site metadata
- Peak flows
- Rating curves
- Discrete water-quality data

Water Quality Portal (WQP) Data

- Discrete water-quality data
- USGS and non-USGS data

Installation

dataRetrieval is available on the Comprehensive R Archive Network (CRAN) repository. To install dataRetrieval on your computer, open RStudio and run this line of code in the Console: `install.packages("dataRetrieval")`

Then, each time you open R, you’ll need to load the package: `library(dataRetrieval)`

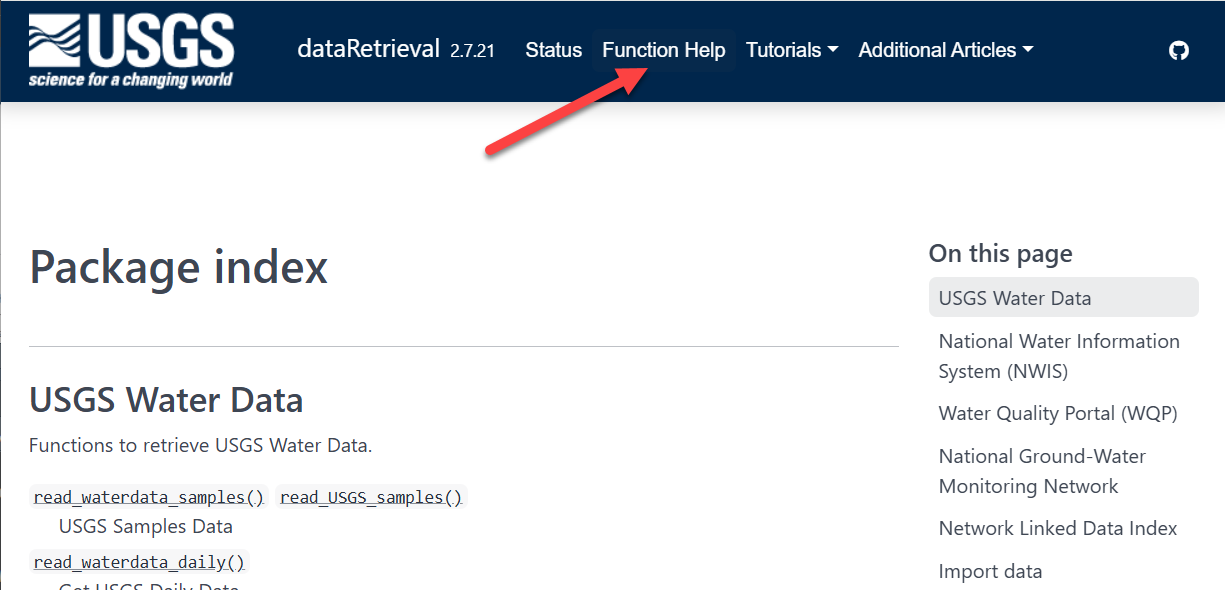

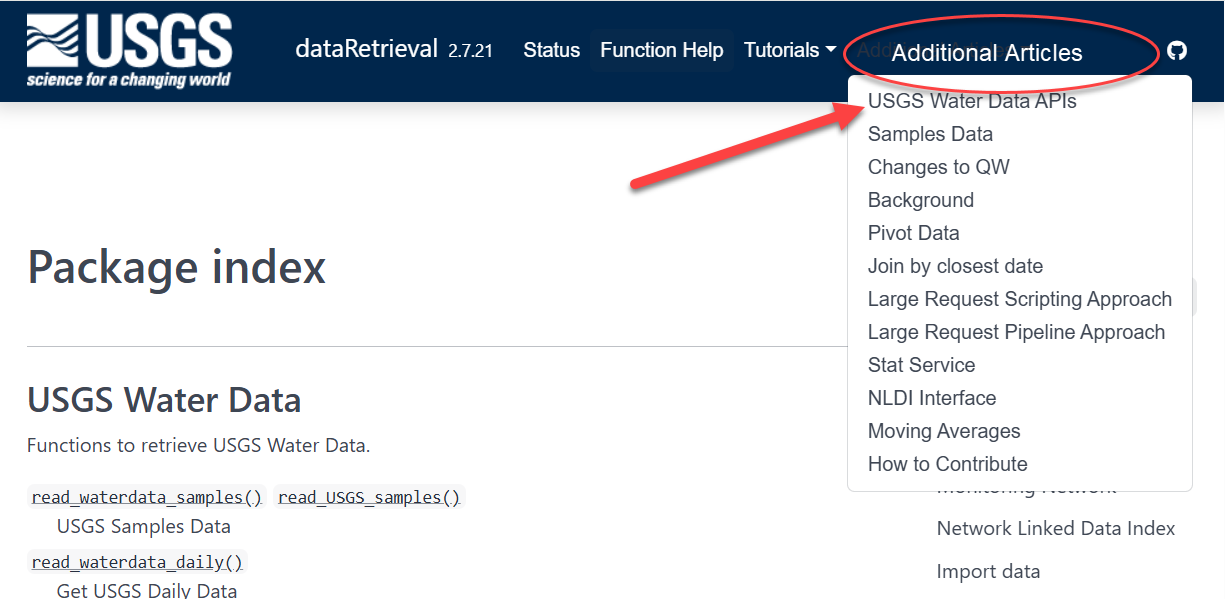

dataRetrieval: External Documentation


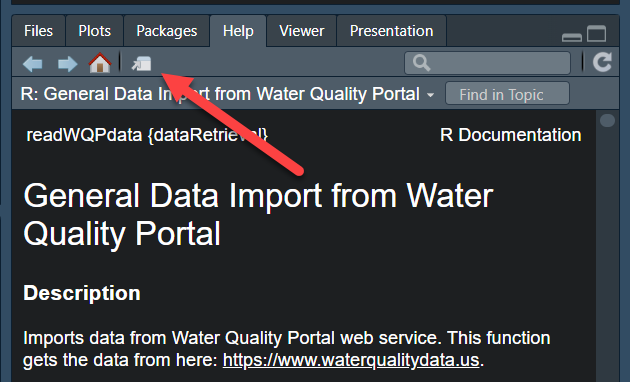

Documentation within R: function help pages

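Within R, you can open the help file for any dataRetrieval function by typing `?` followed by the function name in the Console.

Within Python, you can call `help()` on any dataretrieval function. For example, after `from dataretrieval import waterdata`, running `help(waterdata.get_daily)` prints: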

Help on function get_daily in module dataretrieval.waterdata.api:

get_daily(monitoring_location_id: 'str | Iterable[str] | None' = None, parameter_code: 'str | Iterable[str] | None' = None, statistic_id: 'str | Iterable[str] | None' = None, properties: 'str | Iterable[str] | None' = None, time_series_id: 'str | Iterable[str] | None' = None, daily_id: 'str | Iterable[str] | None' = None, approval_status: 'str | Iterable[str] | None' = None, unit_of_measure: 'str | Iterable[str] | None' = None, qualifier: 'str | Iterable[str] | None' = None, value: 'str | Iterable[str] | None' = None, last_modified: 'str | None' = None, skip_geometry: 'bool | None' = None, time: 'str | Iterable[str] | None' = None, bbox: 'list[float] | None' = None, limit: 'int | None' = None, filter: 'str | None' = None, filter_lang: 'FILTER_LANG | None' = None, convert_type: 'bool' = True) -> 'tuple[pd.DataFrame, BaseMetadata]'

Daily data provide one data value to represent water conditions for the

day.

Throughout much of the history of the USGS, the primary water data available

was daily data collected manually at the monitoring location once each day.

With improved availability of computer storage and automated transmission of

data, the daily data published today are generally a statistical summary or

metric of the continuous data collected each day, such as the daily mean,

minimum, or maximum value. Daily data are automatically calculated from the

continuous data of the same parameter code and are described by parameter

code and a statistic code. These data have also been referred to as “daily

values” or “DV”.

Parameters

----------

monitoring_location_id : string or iterable of strings, optional

A unique identifier representing a single monitoring location. This

corresponds to the id field in the monitoring-locations endpoint.

Monitoring location IDs are created by combining the agency code of

the agency responsible for the monitoring location (e.g. USGS) with

the ID number of the monitoring location (e.g. 02238500), separated

by a hyphen (e.g. USGS-02238500).

parameter_code : string or iterable of strings, optional

Parameter codes are 5-digit codes used to identify the constituent

measured and the units of measure. A complete list of parameter

codes and associated groupings can be found at

https://help.waterdata.usgs.gov/codes-and-parameters/parameters.

statistic_id : string or iterable of strings, optional

A code corresponding to the statistic an observation represents.

Example codes include 00001 (max), 00002 (min), and 00003 (mean).

A complete list of codes and their descriptions can be found at

https://help.waterdata.usgs.gov/code/stat_cd_nm_query?stat_nm_cd=%25&fmt=html.

properties : string or iterable of strings, optional

A vector of requested columns to be returned from the query.

Available options are: geometry, id, time_series_id,

monitoring_location_id, parameter_code, statistic_id, time, value,

unit_of_measure, approval_status, qualifier, last_modified

time_series_id : string or iterable of strings, optional

A unique identifier representing a single time series. This

corresponds to the id field in the time-series-metadata endpoint.

daily_id : string or iterable of strings, optional

A universally unique identifier (UUID) representing a single version of

a record. It is not stable over time. Every time the record is refreshed

in our database (which may happen as part of normal operations and does

not imply any change to the data itself) a new ID will be generated. To

uniquely identify a single observation over time, compare the time and

time_series_id fields; each time series will only have a single

observation at a given time.

approval_status : string or iterable of strings, optional

Some of the data that you have obtained from this U.S. Geological Survey

database may not have received Director's approval. Any such data values

are qualified as provisional and are subject to revision. Provisional

data are released on the condition that neither the USGS nor the United

States Government may be held liable for any damages resulting from its

use. This field reflects the approval status of each record, and is either

"Approved", meaning processing review has been completed and the data is

approved for publication, or "Provisional" and subject to revision. For

more information about provisional data, go to:

https://waterdata.usgs.gov/provisional-data-statement/.

unit_of_measure : string or iterable of strings, optional

A human-readable description of the units of measurement associated

with an observation.

qualifier : string or iterable of strings, optional

This field indicates any qualifiers associated with an observation, for

instance if a sensor may have been impacted by ice or if values were

estimated.

value : string or iterable of strings, optional

The value of the observation. Values are transmitted as strings in

the JSON response format in order to preserve precision.

last_modified : string, optional

The last time a record was refreshed in our database. This may happen

due to regular operational processes and does not necessarily indicate

anything about the measurement has changed. You can query this field

using date-times or intervals, adhering to RFC 3339, or using ISO 8601

duration objects. Intervals may be bounded or half-bounded (double-dots

at start or end).

Examples:

* A date-time: "2018-02-12T23:20:50Z"

* A bounded interval: "2018-02-12T00:00:00Z/2018-03-18T12:31:12Z"

* Half-bounded intervals: "2018-02-12T00:00:00Z/.." or

"../2018-03-18T12:31:12Z"

* Duration objects: "P1M" for data from the past month or

"PT36H" for the last 36 hours

Only features that have a last_modified that intersects the value of

datetime are selected.

skip_geometry : boolean, optional

This option can be used to skip response geometries for each feature.

The returning object will be a data frame with no spatial information.

Note that the USGS Water Data APIs use camelCase "skipGeometry" in

CQL2 queries.

time : string, optional

The date an observation represents. You can query this field using

date-times or intervals, adhering to RFC 3339, or using ISO 8601

duration objects. Intervals may be bounded or half-bounded (double-dots

at start or end). Only features that have a time that intersects the

value of datetime are selected. If a feature has multiple temporal

properties, it is the decision of the server whether only a single

temporal property is used to determine the extent or all relevant

temporal properties.

Examples:

* A date-time: "2018-02-12T23:20:50Z"

* A bounded interval: "2018-02-12T00:00:00Z/2018-03-18T12:31:12Z"

* Half-bounded intervals: "2018-02-12T00:00:00Z/.." or

"../2018-03-18T12:31:12Z"

* Duration objects: "P1M" for data from the past month or

"PT36H" for the last 36 hours

bbox : list of numbers, optional

Only features that have a geometry that intersects the bounding box are

selected. The bounding box is provided as four or six numbers,

depending on whether the coordinate reference system includes a vertical

axis (height or depth). Coordinates are assumed to be in crs 4326. The

expected format is a numeric vector structured: c(xmin,ymin,xmax,ymax).

Another way to think of it is c(Western-most longitude, Southern-most

latitude, Eastern-most longitude, Northern-most latitude).

limit : numeric, optional

The optional limit parameter is used to control the subset of the

selected features that should be returned in each page. The maximum

allowable limit is 50000. It may be beneficial to set this number lower

if your internet connection is spotty. The default (None) will set the

limit to the maximum allowable limit for the service.

filter, filter_lang : optional

Server-side CQL filter passed through as the OGC ``filter`` /

``filter-lang`` query parameters. See

:mod:`dataretrieval.waterdata.filters` for syntax, auto-chunking,

and the lexicographic-comparison pitfall.

convert_type : boolean, optional

If True, converts columns to appropriate types.

Returns

-------

df : ``pandas.DataFrame`` or ``geopandas.GeoDataFrame``

Formatted data returned from the API query.

md: :obj:`dataretrieval.utils.Metadata`

A custom metadata object

Examples

--------

.. code::

>>> # Get daily flow data from a single site

>>> # over a yearlong period

>>> df, md = dataretrieval.waterdata.get_daily(

... monitoring_location_id="USGS-02238500",

... parameter_code="00060",

... time="2021-01-01T00:00:00Z/2022-01-01T00:00:00Z",

... )

>>> # Quick "show me the last week" idiom (ISO 8601 duration)

>>> df, md = dataretrieval.waterdata.get_daily(

... monitoring_location_id="USGS-02238500",

... parameter_code="00060",

... time="P7D",

... )

>>> # Get approved daily flow data from multiple sites

>>> df, md = dataretrieval.waterdata.get_daily(

... monitoring_location_id=["USGS-05114000", "USGS-09423350"],

... approval_status="Approved",

... time="2024-01-01/..",

... )

>>> # Pull only rows whose underlying record was refreshed in the

>>> # last 7 days — handy for incremental ETL polling

>>> df, md = dataretrieval.waterdata.get_daily(

... monitoring_location_id="USGS-02238500",

... parameter_code="00060",

... last_modified="P7D",

... )

>>> # Chain queries: pull all stream sites in a state, then their

>>> # daily discharge for the last week. The site list can be hundreds

>>> # of values long — the request is transparently chunked across

>>> # multiple sub-requests so the URL stays under the server's byte

>>> # limit. Combined output looks like a single query.

>>> sites_df, _ = dataretrieval.waterdata.get_monitoring_locations(

... state_name="Ohio",

... site_type="Stream",

... )

>>> df, md = dataretrieval.waterdata.get_daily(

... monitoring_location_id=sites_df["monitoring_location_id"].tolist(),

... parameter_code="00060",

... time="P7D",

... )

dataRetrieval Updates

Are you a seasoned dataRetrieval user?

Here are resources for recent major changes:

What’s New?

There have been a lot of changes to dataRetrieval over the past year. If you’d like to see an overview of those changes, visit: Changes to dataRetrieval

Biggest changes:

- NWIS servers will be shut down, so all readNWIS functions will eventually stop working.
- read_waterdata functions are modern and should be used when possible.
- The “USGS Water Data APIs” are the new home for USGS data.

USGS Water Data API Token

The Water Data APIs limit how many queries a single IP address can make per hour.

- You can run the new dataRetrieval functions without a token.
- You might run into errors quickly. If you (or your IP!) have exceeded the quota, you will see:

! HTTP 429 Too Many Requests.
• You have exceeded your rate limit. Make sure you provided your API key from https://api.waterdata.usgs.gov/signup/, then either try again later or contact us at https://waterdata.usgs.gov/questions-comments/?referrerUrl=https://api.waterdata.usgs.gov for assistance.
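If a script does hit this limit, one option is to wait and retry instead of failing on the first 429. The Python below is a minimal sketch of exponential backoff under stated assumptions: `retry_on_429` and the `fetch` callable it wraps are hypothetical (not part of dataRetrieval or dataretrieval), and `fetch` is assumed to return an HTTP status code and a body. The demo simulates the server, so no network is used.

```python
import time
from typing import Callable, Tuple

def retry_on_429(
    fetch: Callable[[], Tuple[int, str]],
    max_tries: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Call fetch() and retry with exponential backoff while it returns HTTP 429.

    `fetch` is a hypothetical zero-argument callable returning (status, body);
    swap in whatever actually performs your request.
    """
    if max_tries < 1:
        raise ValueError("max_tries must be at least 1")
    for attempt in range(max_tries):
        status, body = fetch()
        if status != 429:
            return status, body
        if attempt < max_tries - 1:
            # Wait base_delay * 1, 2, 4, ... seconds before trying again
            sleep(base_delay * 2 ** attempt)
    # Still rate limited after max_tries attempts: hand back the last 429
    return status, body


# Simulated server: rate limited twice, then succeeds (no network involved)
responses = iter([(429, "Too Many Requests"), (429, "Too Many Requests"), (200, "ok")])
delays = []
status, body = retry_on_429(lambda: next(responses), sleep=delays.append)
print(status, delays)  # 200 [1.0, 2.0]
```

Backing off spreads requests out but does not raise the hourly quota; the error message itself points to requesting an API key.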

1. Request a USGS Water Data API Token: https://api.waterdata.usgs.gov/signup/
2. Save it in a safe place (KeePass or another password management tool).
3. Add it to your .Renviron file as API_USGS_PAT.
4. Restart R.
5. Check that it worked by running `Sys.getenv("API_USGS_PAT")` (you should see your token printed in the Console).

See next slide for a demonstration.

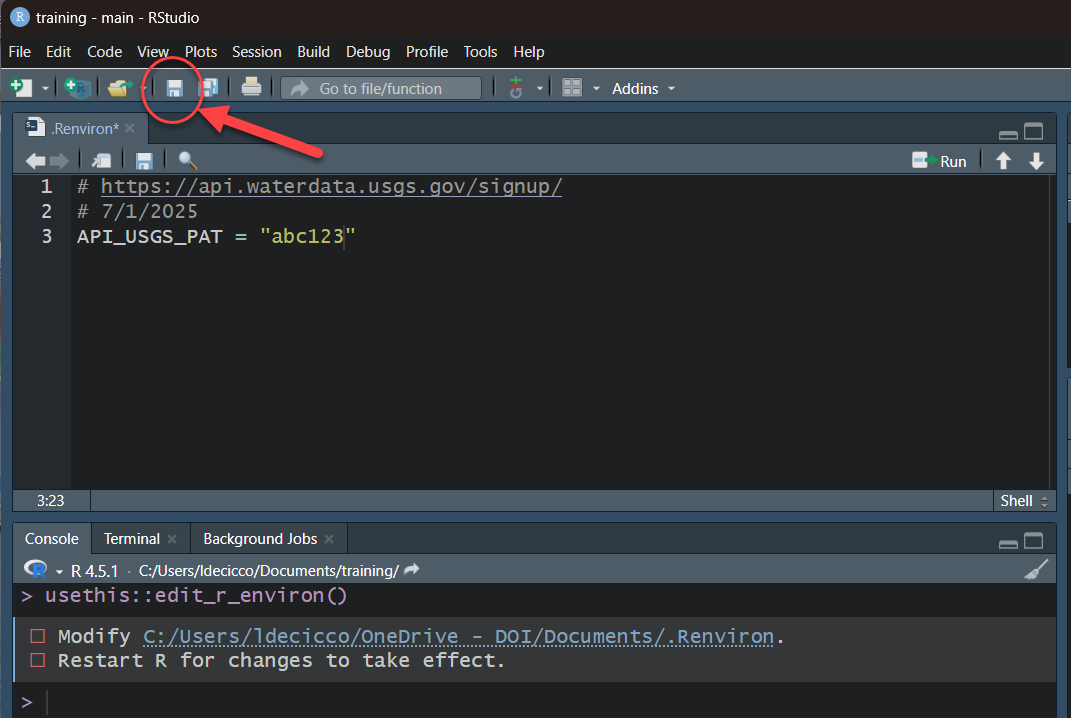

USGS Water Data API Token: Example

My favorite way to add your token to .Renviron is with the usethis package. Let’s pretend the token you were sent was “abc123”:

1. Run in R: `usethis::edit_r_environ()`
2. Add this line to the file that opens up: `API_USGS_PAT="abc123"`
3. Save that file using the save button.
4. Restart R/RStudio.
5. After restarting, check that it worked by running `Sys.getenv("API_USGS_PAT")`.
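If you also work in Python, a similar check can be run there. This minimal sketch assumes the token has been exported as an API_USGS_PAT environment variable in the shell or OS that launches Python (a .Renviron file is read only by R at startup). `token_status` is a hypothetical helper, and unlike the R check it prints a masked token rather than the full secret.

```python
import os

def token_status(var: str = "API_USGS_PAT") -> str:
    """Report whether a token is set, revealing at most its last 3 characters."""
    token = os.environ.get(var, "").strip()
    if not token:
        return f"{var} is not set; requests will count against your IP address's quota."
    # Reveal the last 3 characters only when the token is longer than 3 characters
    visible = token[-3:] if len(token) > 3 else ""
    masked = "*" * (len(token) - len(visible)) + visible
    return f"{var} is set: {masked}"

print(token_status())
```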

USGS Basic Retrievals

The USGS uses various codes for basic retrievals. These codes can have leading zeros, so they need to be character strings surrounded by quotes (“00060”).

- Site ID (often 8 or 15 digits)
- Parameter code (5 digits)
  - Full list: read_waterdata_parameter_codes()
- Statistic code (for daily values)
  - Full list: read_metadata("statistic-codes")
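Here is a quick Python illustration of why the quotes matter, plus the agency-prefixed ID format described in the get_daily help text (agency code, a hyphen, then the site number, e.g. USGS-02238500). `make_monitoring_location_id` is a hypothetical helper written for this sketch, not a dataRetrieval or dataretrieval function.

```python
def make_monitoring_location_id(site_no: str, agency: str = "USGS") -> str:
    """Combine an agency code and a site number, e.g. 'USGS-02238500'."""
    if not isinstance(site_no, str):
        # Numbers have already lost any leading zeros, so require text input
        raise TypeError("site_no must be a string such as '02238500'")
    return f"{agency}-{site_no}"

# Converting codes to numbers strips their leading zeros:
print(int("00060"))     # 60, no longer a valid parameter code
print(int("02238500"))  # 2238500, no longer a valid site number

print(make_monitoring_location_id("02238500"))  # USGS-02238500
```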

USGS Basic Retrievals: Parameter and Statistic Codes

Here are some examples of a few common codes:

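| Parameter code | Description |
|---|---|
| 00060 | Discharge |
| 00660 | Orthophosphate |
| 99133 | Nitrate plus nitrite |

| Statistic code | Description |
|---|---|
| 00001 | Maximum |
| 00002 | Minimum |
| 00003 | Mean |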

Let’s Go!

We’re going to walk through 3 retrievals:

Workflow 1: Daily Data
- Uses the new USGS Water Data API
- Modern data access point going forward

Workflow 2: Discrete Data
- Uses the new USGS Samples Data API
- Modern data access point going forward

Workflow 3: Continuous Data
- Uses the new USGS Water Data API
- Modern data access point going forward

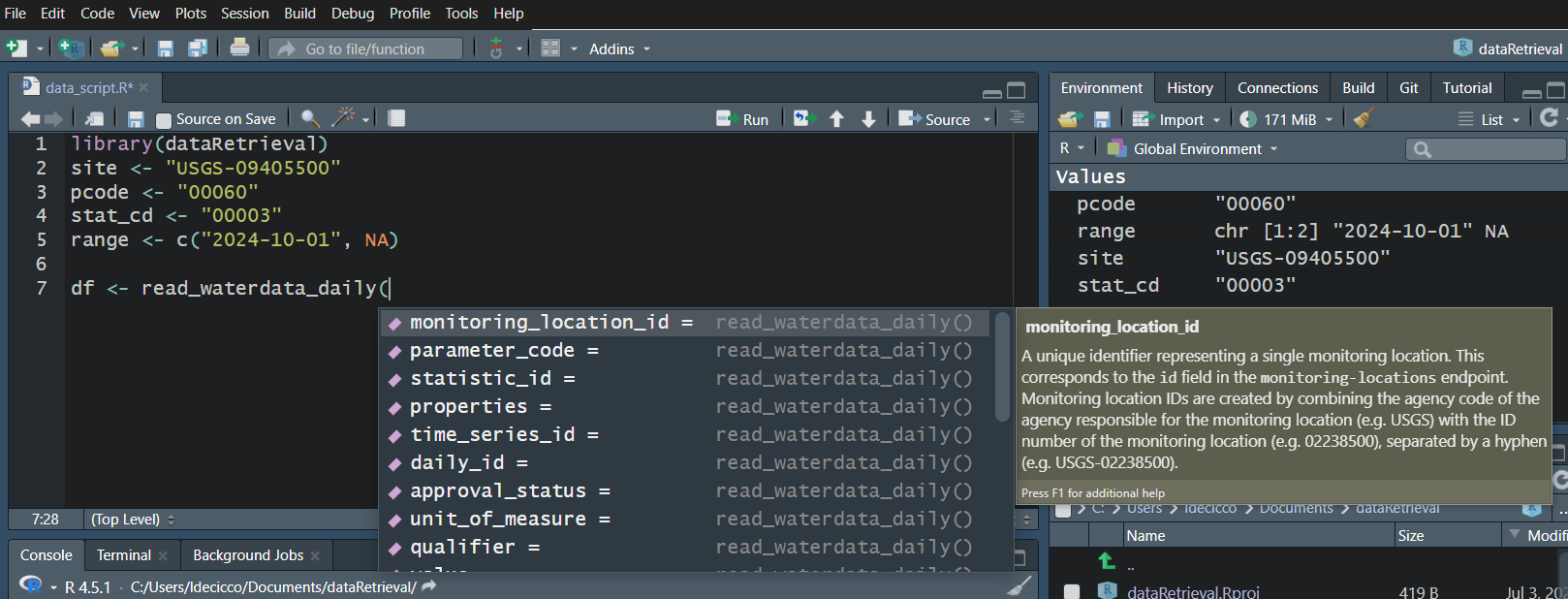

Workflow 1: Daily data for known site

Let’s pull daily mean discharge data for site “USGS-09405500”, getting all the data from October 1, 2025 onward.
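Under the hood, this request becomes a query against the daily collection of the Water Data API. As a rough sketch in standard-library Python (not how dataRetrieval itself is implemented), the arguments map onto query parameters as shown here; the parameter names and values are copied from the request URL that dataRetrieval prints for this query, while `daily_items_url` is a hypothetical helper.

```python
from urllib.parse import urlencode

BASE = "https://api.waterdata.usgs.gov/ogcapi/v0/collections/daily/items"

def daily_items_url(**params: str) -> str:
    """Build a daily-collection request URL from keyword query parameters."""
    query = {"f": "json", "lang": "en-US", **params}
    query.setdefault("limit", "50000")  # the service's maximum page size
    # urlencode percent-encodes reserved characters, e.g. "/" becomes %2F
    return f"{BASE}?{urlencode(query)}"

url = daily_items_url(
    monitoring_location_id="USGS-09405500",
    parameter_code="00060",  # discharge
    statistic_id="00003",    # daily mean
    time="2025-10-01/..",    # from Oct 1, 2025 to the end of the record
)
print(url)
```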

Requesting:

https://api.waterdata.usgs.gov/ogcapi/v0/collections/daily/items?f=json&lang=en-US&monitoring_location_id=USGS-09405500&parameter_code=00060&statistic_id=00003&time=2025-10-01%2F..&limit=50000

Remaining requests this hour: 1923

[1] 244

Workflow 1: Look at Daily Data

In RStudio, click on the data frame in the upper right Environment tab to open a Viewer.

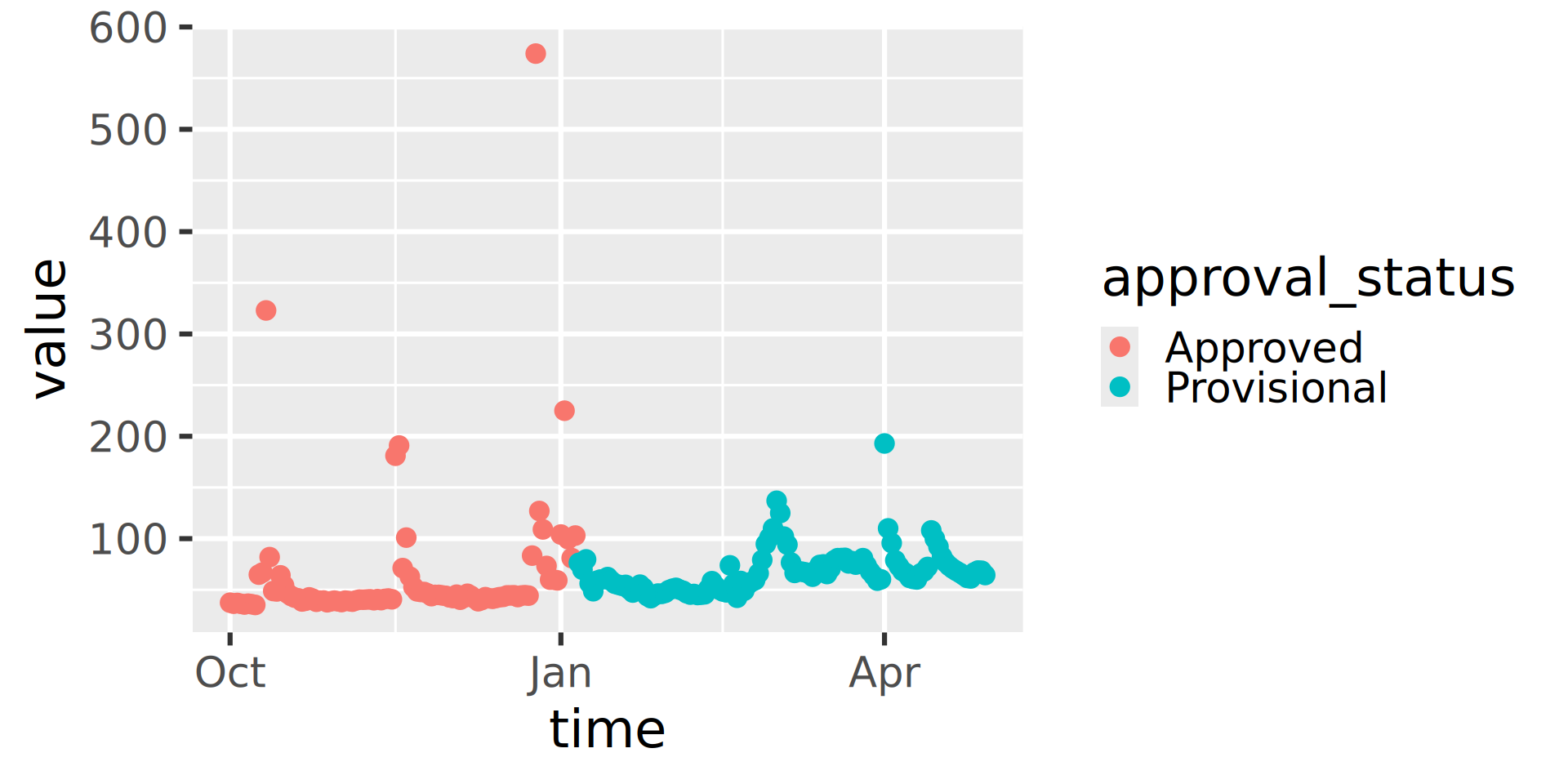

Workflow 1: Plot Daily Data

Let’s use ggplot2 to visualize the data.

Let’s use matplotlib to visualize the data.

Water Data API Notes: Argument input

Use your “tab” key!

Water Data API Notes: Arguments

When you look at the help file for the new functions, you’ll notice there are lots of possible inputs (arguments).

You DO NOT need to (and should not!) specify all of these parameters.

However, also consider what happens if you leave too many things blank. What do you suppose a daily-data request that only specifies `parameter_code = "00060"` and `statistic_id = "00003"` will return?

Since no list of sites or bounding box was defined, ALL the daily data in the entire country with parameter code “00060” and statistic code “00003” will be returned.
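One way to guard against accidentally requesting national-scale data is a small pre-flight check before sending a request. This is a hypothetical Python helper (not part of dataRetrieval or dataretrieval). It assumes, based on the get_daily parameter list, that monitoring_location_id, time_series_id, and bbox are the arguments that tie a daily-data query to specific places.

```python
import warnings
from typing import Any

# Arguments that tie a daily-data query to specific places. This list is an
# assumption drawn from the get_daily parameters; parameter_code, time, etc.
# narrow what is returned but not where.
LOCATION_ARGS = ("monitoring_location_id", "time_series_id", "bbox")

def check_query_scope(**kwargs: Any) -> bool:
    """Return True if any location argument is set; otherwise warn and return False."""
    scoped = any(kwargs.get(name) not in (None, "", []) for name in LOCATION_ARGS)
    if not scoped:
        warnings.warn(
            "No sites, time series, or bounding box given: "
            "this query may return data for the entire country.",
            stacklevel=2,
        )
    return scoped

print(check_query_scope(parameter_code="00060", statistic_id="00003"))  # False (with a warning)
print(check_query_scope(monitoring_location_id="USGS-09405500", parameter_code="00060"))  # True
```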

Water Data API Notes: time input

The “time” argument has a few options:

- A single date (or date-time): "2024-10-01" or "2024-10-01T23:20:50Z"
- A bounded interval: c("2024-10-01", "2025-07-02")
- Half-bounded intervals: c("2024-10-01", NA)
- Duration objects: "P1M" for data from the past month or "PT36H" for the last 36 hours
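These R-style inputs correspond to the API's own time syntax, where an interval is two endpoints joined by "/" and ".." marks an open end (see the get_daily help text). Here is a minimal Python sketch of that mapping, using None where R uses NA. `format_time_arg` is a hypothetical helper for illustration, not the conversion dataRetrieval actually performs, and it assumes naive date-times are already in UTC.

```python
from datetime import date, datetime, timezone
from typing import Optional, Sequence, Union

TimeValue = Optional[Union[str, date, datetime]]

def _endpoint(value: TimeValue) -> str:
    """Render one endpoint; None (R's NA) becomes the open-ended marker '..'."""
    if value is None:
        return ".."
    if isinstance(value, datetime):  # check before date: datetime subclasses date
        if value.tzinfo is not None:
            value = value.astimezone(timezone.utc)
        # Naive datetimes are assumed to already be in UTC
        return value.strftime("%Y-%m-%dT%H:%M:%SZ")
    if isinstance(value, date):
        return value.isoformat()
    return value  # strings (dates, date-times, durations) pass through unchanged

def format_time_arg(time: Union[TimeValue, Sequence[TimeValue]]) -> str:
    """Turn a single time, a duration, or a (start, end) pair into an API time string."""
    if isinstance(time, (list, tuple)):
        if len(time) != 2:
            raise ValueError("a range needs exactly two endpoints")
        start, end = time
        if start is None and end is None:
            raise ValueError("at least one endpoint must be given")
        return f"{_endpoint(start)}/{_endpoint(end)}"
    if time is None:
        raise ValueError("time must not be None")
    return _endpoint(time)

print(format_time_arg("2024-10-01"))                  # 2024-10-01
print(format_time_arg(["2024-10-01", "2025-07-02"]))  # 2024-10-01/2025-07-02
print(format_time_arg(("2024-10-01", None)))          # 2024-10-01/..
print(format_time_arg((None, date(2024, 1, 1))))      # ../2024-01-01
print(format_time_arg("PT36H"))                       # PT36H
```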

Here are a bunch of valid inputs:

# Ask for exact times:
time = "2025-01-01"
time = as.Date("2025-01-01")
time = "2025-01-01T23:20:50Z"
time = as.POSIXct(
  "2025-01-01T23:20:50Z",
  format = "%Y-%m-%dT%H:%M:%S",
  tz = "UTC"
)

# Ask for a specific range:
time = c("2024-01-01", "2025-01-01") # or Date or POSIXct objects

# Ask from the beginning of the record to a specific end:
time = c(NA, "2024-01-01") # or Date or POSIXct

# Ask from a specific beginning to the end of the record:
time = c("2024-01-01", NA) # or Date or POSIXct

# Ask for a period:
time = "P1M" # past month
time = "P7D" # past 7 days
time = "PT12H" # past 12 hours

Workflow 2: Discrete data for known site

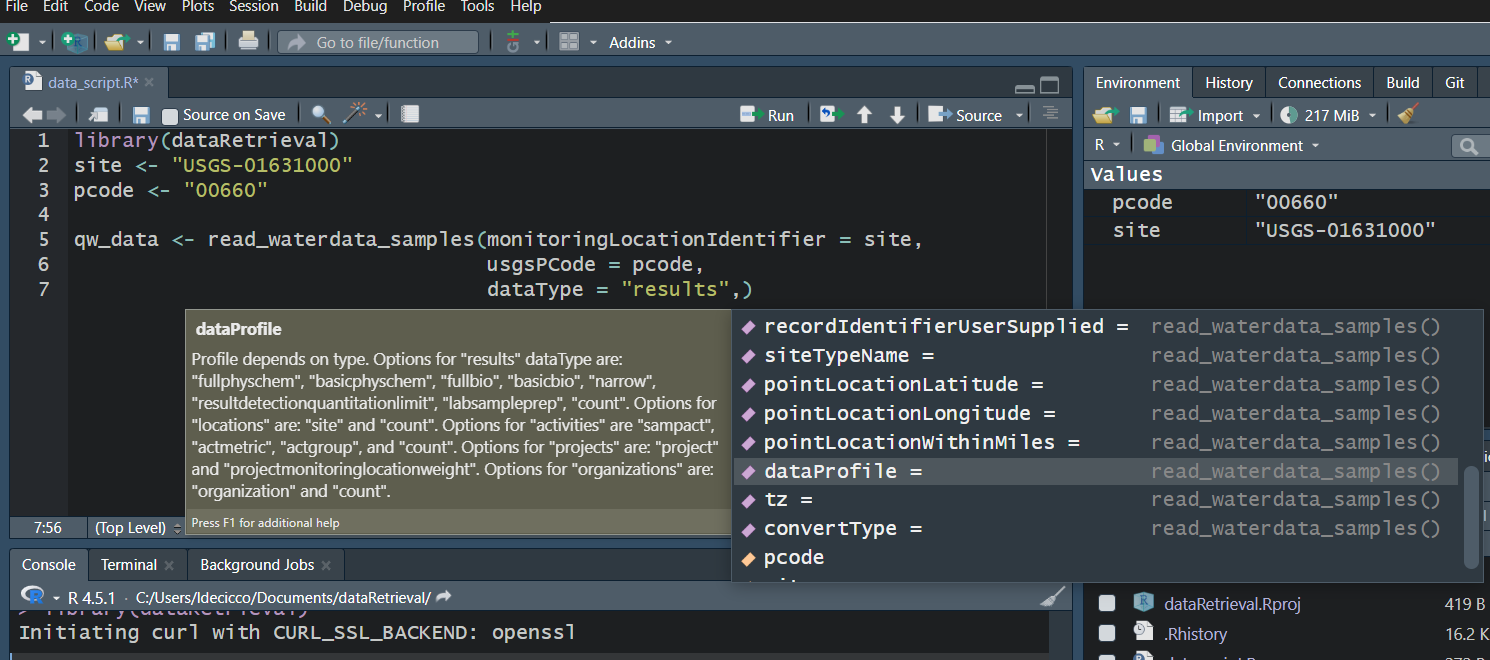

Use your “tab” key!


Let’s get orthophosphate (“00660”) data from the Shenandoah River at Front Royal, VA (“USGS-01631000”).

GET: https://api.waterdata.usgs.gov/samples-data/results/basicphyschem?mimeType=text%2Fcsv&monitoringLocationIdentifier=USGS-01631000&usgsPCode=00660

[1] 104

R generates a few POSIXct columns that combine date, time, and time zone information.

That’s a LOT of columns returned. We won’t look at them here, but you can use View in RStudio to explore on your own.
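As with the daily data, the printed GET request is a base URL plus query parameters. This Python sketch rebuilds it with the standard library. The path segments "results" and "basicphyschem" appear to correspond to the data type and profile discussed next; that reading is an inference from the URL, not documented behavior, and `samples_url` is a hypothetical helper.

```python
from urllib.parse import urlencode

SAMPLES_BASE = "https://api.waterdata.usgs.gov/samples-data"

def samples_url(data_type: str, profile: str, **params: str) -> str:
    """Build a Samples Data request URL, asking for CSV output via mimeType."""
    query = {"mimeType": "text/csv", **params}
    return f"{SAMPLES_BASE}/{data_type}/{profile}?{urlencode(query)}"

url = samples_url(
    "results",
    "basicphyschem",
    monitoringLocationIdentifier="USGS-01631000",  # Shenandoah River at Front Royal, VA
    usgsPCode="00660",                             # orthophosphate
)
print(url)
```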

USGS Samples Data Notes: Data Types and Profiles

In R, there are 2 arguments that dictate what kind of data is returned:
- “dataType” defines what kind of data comes back
- “dataProfile” defines what columns from that type come back

In Python, there are 2 parameters that dictate what kind of data is returned:
- “service” defines what kind of data comes back
- “profile” defines what columns from that type come back

Data Types and Profiles

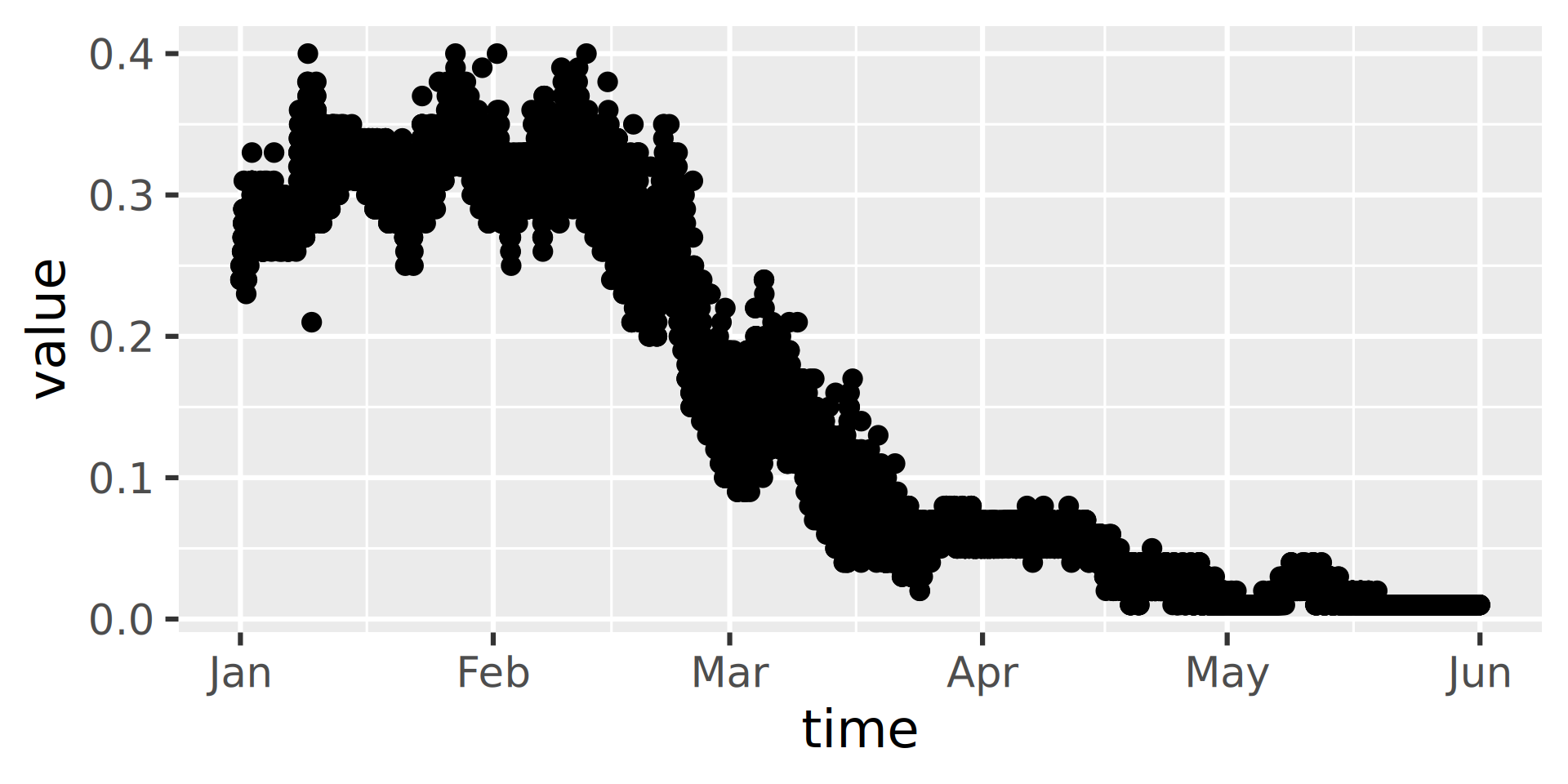

Workflow 3: Continuous data for known site

Continuous data is the high-frequency sensor data.

We’ll look at Suisun Bay a Van Sickle Island NR Pittsburg CA (“USGS-11455508”), with parameter code “99133” which is Nitrate plus Nitrite.


[1] "monitoring_location_id"
[2] "parameter_code"
[3] "statistic_id"
[4] "time"
[5] "value"
[6] "unit_of_measure"
[7] "approval_status"
[8] "qualifier"
[9] "last_modified"
[10] "time_series_id"

Index(['time_series_id', 'monitoring_location_id', 'parameter_code',
       'statistic_id', 'time', 'value', 'unit_of_measure', 'approval_status',
       'qualifier', 'last_modified', 'continuous_id'],
      dtype='str')

Workflow 3: Inspect

Data Discovery

- read_waterdata_ts_meta discovers available daily and continuous time series.
- read_waterdata_field_meta discovers available field measurements.
- read_waterdata_combined_meta combines time-series and field-measurement discovery. This function also provides the most flexibility with geographic queries.
- summarize_waterdata_samples discovers discrete data at specific monitoring locations.

The next slides will demo how to use those.

Data Discovery: Time Series

Data Discovery: Discrete

characteristicUserSupplied

- characteristicUserSupplied can be an input to read_waterdata_samples

More Information

- dataRetrieval R repository:

- dataretrieval Python repository:

- Contact:

- Computational Tools Email: comptools@usgs.gov

Any use of trade, firm, or product name is for descriptive purposes only and does not imply endorsement by the U.S. Government.